Today I added SSL to my Apache webserver, running on Ubuntu 12, on an AWS instance. This was the first time I’d ever worked with SSL or certificates, and it was fairly straightforward, though it seemed daunting at first. I ran into a few problems that the Internet didn’t solve for me, so I thought I’d share.

My sequence end to end:

1. When I bought my domain name through Namecheap, it came with an SSL certificate, which I had never activated. Namecheap apparently subcontracts SSL services to a company called Comodo. You’ll presumably need to purchase a trusted certificate from an authority like Comodo or DigiCert if you don’t already have one for your production site.

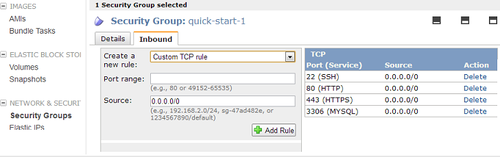

2. In preparation for using SSL, I added HTTPS (port 443) to my EC2 Security Group to allow traffic through the firewall. This is in the AWS Management Console (EC2 -> Security Groups in the left nav -> select the group in use by your server instance -> click the Inbound tab; HTTPS is listed in the dropdown of pre-configured rules you can add). Here’s what it looks like after you add it:

3. I followed the instructions here to generate a private key (myprivatekey.key) and a CSR file (myserver.csr), saving them to a special directory for safekeeping. Basically this consisted of running:

openssl req -nodes -newkey rsa:2048 -keyout myprivatekey.key -out myserver.csr
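That command prompts interactively for the certificate's subject fields. For a repeatable run, the same fields can be passed with `-subj`. The sketch below assumes placeholder subject values and www.myserver.com as the Common Name (none of these come from the real setup), then asks OpenSSL to check the CSR and print its subject before it gets submitted.

```shell
# Generate a 2048-bit RSA key and a CSR without interactive prompts.
# All subject values are placeholders; the CN should be the exact hostname
# the certificate will serve (www.myserver.com is assumed here).
openssl req -nodes -newkey rsa:2048 \
  -keyout myprivatekey.key -out myserver.csr \
  -subj "/C=US/ST=California/L=San Francisco/O=Example Org/CN=www.myserver.com"

# The key is unencrypted (-nodes), so restrict it to the owner.
chmod 600 myprivatekey.key

# Verify the CSR's self-signature and print its subject.
openssl req -in myserver.csr -noout -verify -subject
```

The Common Name matters most here: it is the name browsers (and Apache) will compare against the hostname being served.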

Note that I’m using Apache 2 with mod_ssl. Instructions for other OS/webserver configurations are here.

4. I submitted my generated CSR to Namecheap through their web form, clicked the link in an approval email they sent, then received my certificate files by email from Comodo. They sent me a zip containing 2 files:

- www_myserver_com.crt

- www_myserver_com.ca-bundle

5. Next I roughly followed the instructions here to set up the SSL files in production. For other OS/webserver configs, you can look here. For my setup, this consisted of:

- a. uploading the myprivatekey.key file and the zip with the certificate files to my AWS instance, then unzipping the certificate files

- b. copying the private key under /etc/ssl/private

- c. copying the 2 unzipped certificate files under /etc/ssl/certs
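Before pointing Apache at these files, it may be worth confirming that the certificate really belongs to the private key, since a mismatched pair generally prevents Apache from starting with SSL enabled. A minimal sketch, assuming an RSA key like the one generated in step 3; `key_matches_cert` is a hypothetical helper name, not part of OpenSSL.

```shell
# Return 0 if the RSA private key ($1) and the certificate ($2) share the
# same modulus, i.e. they belong together; non-zero otherwise.
key_matches_cert() {
  key_mod=$(openssl rsa -in "$1" -noout -modulus) || return 2
  cert_mod=$(openssl x509 -in "$2" -noout -modulus) || return 2
  [ "$key_mod" = "$cert_mod" ]
}

# Example, run from the directory holding the uploaded files:
#   key_matches_cert myprivatekey.key www_myserver_com.crt \
#     && echo "key and certificate match"
```

If the check passes, the copies in steps b and c can go ahead. Keeping the private key readable only by root is a common precaution.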

6. Rather than mucking with Apache’s default config files, I typically load my own Apache .conf file, which lives at /etc/apache2/conf.d/mydomain.conf. To enable SSL, I edited mydomain.conf, adding the block below.

<VirtualHost *:443>

SSLEngine on

ServerName myserver.com

SSLCertificateKeyFile /etc/ssl/private/myprivatekey.key

SSLCertificateFile /etc/ssl/certs/www_myserver_com.crt

SSLCertificateChainFile /etc/ssl/certs/www_myserver_com.ca-bundle

</VirtualHost>

I already had entries for port 80, so I just had to add port 443. The DocumentRoot and log file locations were inherited from elsewhere in my config, which was fine for my purposes.

7. I enabled SSL for Apache by symlinking the available module under the enabled modules directory, then restarted Apache:

$ pushd /etc/apache2/mods-enabled/

$ sudo ln -s ../mods-available/ssl.conf ssl.conf

$ sudo ln -s ../mods-available/ssl.load ssl.load

$ sudo /usr/sbin/apachectl restart
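On Ubuntu, the `a2enmod` helper creates the same two symlinks, and `apache2ctl configtest` can catch syntax errors before a restart takes the site down. The sketch below is an alternative to the manual symlinks above, not a record of the original steps. It is wrapped in a hypothetical function, `enable_ssl_and_restart`, so nothing runs until it is called.

```shell
# Enable mod_ssl with Ubuntu's helper, syntax-check the config, then restart.
# Each step must succeed before the next one runs.
enable_ssl_and_restart() {
  sudo a2enmod ssl || return 1            # links ssl.conf and ssl.load into mods-enabled
  sudo apache2ctl configtest || return 1  # prints "Syntax OK" when the config parses
  sudo service apache2 restart
}

# Usage:
#   enable_ssl_and_restart
```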

8. After the restart, I tailed my access.log and error.log to check for problems.

tail -n30 /var/log/apache2/access.log

tail -n30 /var/log/apache2/error.log

Note that I had originally included the following lines in my .conf file (without the # signs to comment them out), but they caused problems.

#NameVirtualHost *:80

#NameVirtualHost *:443

#Listen *:80

#Listen *:443

I removed these lines because they broke Apache restart and yielded these errors:

[Wed Jun 26 23:04:57 2013] [warn] NameVirtualHost *:443 has no VirtualHosts

[Wed Jun 26 23:04:57 2013] [warn] NameVirtualHost *:80 has no VirtualHosts

(98)Address already in use: make_sock: could not bind to address 0.0.0.0:80

no listening sockets available, shutting down

Basically, because I left Apache’s default configuration in place and was using a supplementary conf file, my lines were duplicates of lines in Apache’s /etc/apache2/ports.conf file. You’ll need the Listen port lines somewhere in your Apache configuration to get things working properly, but not loaded twice if you want everything to start!
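To spot this kind of duplication, it helps to list every active Listen and NameVirtualHost directive across the whole configuration tree. A minimal sketch; `find_listen_directives` is a hypothetical helper, and /etc/apache2 is assumed as the default location, as on Ubuntu.

```shell
# Print every uncommented Listen / NameVirtualHost directive found under a
# config directory, as file:line:directive, so duplicates are easy to see.
# Directive names are case-insensitive in Apache, hence -i.
find_listen_directives() {
  conf_dir=${1:-/etc/apache2}
  grep -rniE '^[[:space:]]*(Listen|NameVirtualHost)[[:space:]]' "$conf_dir"
}

# Usage:
#   find_listen_directives                 # scans /etc/apache2
#   find_listen_directives /some/other/dir
```

Any port that shows up in both ports.conf and a supplementary .conf file is a candidate for the "Address already in use" failure.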

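I still see this additional warning in my error.log at startup, but I disregard it since it does not impact functionality. It most likely appears because the certificate’s CN (www.myserver.com) differs from the ServerName in my VirtualHost block (myserver.com). I left that alone, along with the instance hostname as AWS has configured it.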

RSA server certificate CommonName (CN) `www.myserver.com' does NOT match server name!?
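This warning generally means the name in the certificate differs from the name Apache has for that virtual host. One way to see which name the certificate was issued for, so it can be compared against the ServerName directive, is to print its Common Name. A small sketch; `cert_cn` is a hypothetical helper name.

```shell
# Print the Common Name (CN) from a certificate's subject.
# -nameopt multiline puts each subject field on its own line, which makes
# the CN easy to pick out with sed.
cert_cn() {
  openssl x509 -in "$1" -noout -subject -nameopt multiline \
    | sed -n 's/^[[:space:]]*commonName[[:space:]]*=[[:space:]]*//p'
}

# Usage:
#   cert_cn /etc/ssl/certs/www_myserver_com.crt
```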

9. To verify everything was working properly with my SSL certificate, I first ran a check of my website’s certificate configuration here: http://www.digicert.com/help/

10. I then double-checked that both of these URLs worked for my server, and that my Apache access.log showed requests on port 80 and port 443 respectively.

http://www.myservernamehere.com

https://www.myservernamehere.com
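The same two checks can be run from a terminal instead of a browser. A rough sketch, assuming `curl` and `openssl` are installed on the machine doing the checking; `check_site` is a hypothetical helper name, and the hostname in the usage line is the same placeholder as above.

```shell
# Request a hostname over HTTP and HTTPS, print the status codes, then show
# details of the certificate the server presents on port 443.
check_site() {
  host=$1
  # -I sends a HEAD request; -s -o /dev/null -w prints only the status code.
  http_status=$(curl -s -o /dev/null -I -w '%{http_code}' "http://$host/")
  https_status=$(curl -s -o /dev/null -I -w '%{http_code}' "https://$host/")
  echo "http:  $http_status"
  echo "https: $https_status"
  # "echo |" closes stdin so s_client exits right after the handshake.
  echo | openssl s_client -connect "$host:443" -servername "$host" 2>/dev/null \
    | openssl x509 -noout -subject -issuer -dates
}

# Usage:
#   check_site www.myservernamehere.com
```

Since curl verifies the certificate chain by default, an HTTPS status of 000 here usually points to a TLS problem, such as a missing chain file, rather than to an Apache error page.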

Next up: getting an SSL certificate to work in my dev environment, which turns out to be not nearly so straightforward.